In my previous post, I published a guide on how to set up local LLMs with a modern GUI. Now, I’d like to show you a couple of tricks to get the most out of your local chat assistants.

Models

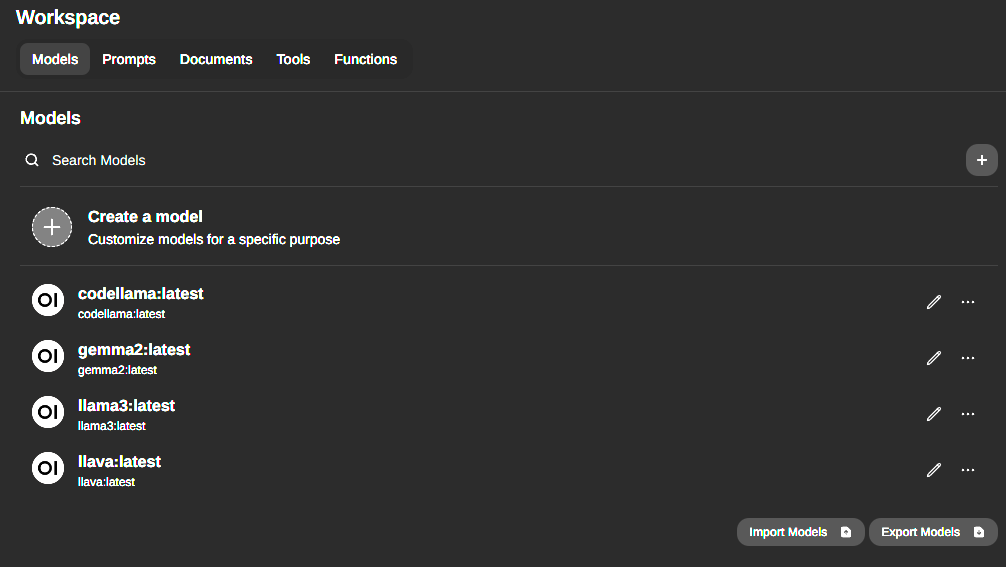

First, I pulled a couple of models from Ollama; you can browse the full list of available models in the Ollama library. I opted for Google’s fairly recently published gemma2, which is more of a general-purpose model. Supposedly, it’s ‘class leading in performance and efficiency’. As a side note, I mostly opt for the variants with the fewest parameters. In this case, I chose the smallest gemma2 variant, since I don’t have a really beefy GPU, and in most practical use cases I’d rather have a faster response time than a boost in accuracy.

Besides gemma2, I downloaded codellama. As the name suggests, it’s specifically trained to help with coding tasks. Lastly, I pulled llava for multimodal tasks such as describing pictures.
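
If you prefer scripting this step, the same download can be triggered through Ollama’s local REST API; it is equivalent to running `ollama pull gemma2` in a terminal. The sketch below assumes Ollama is listening on its default address `http://localhost:11434` and uses only the Python standard library. The helper names `build_pull_request`, `pull` and `pull_all` are my own, and the request field is assumed to be `model` as in current Ollama API docs; check the docs for your Ollama version if it differs.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434"

# The three models discussed above; without an explicit tag,
# Ollama resolves each name to its default ("latest") variant.
MODELS = ("gemma2", "codellama", "llava")


def build_pull_request(model: str, base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/pull endpoint."""
    body = json.dumps({"model": model, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/pull",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def pull(model: str) -> dict:
    """Send the pull request and return Ollama's final status object."""
    with urllib.request.urlopen(build_pull_request(model)) as resp:
        return json.loads(resp.read())


def pull_all(models: tuple[str, ...] = MODELS) -> None:
    """Pull each model in turn and print the reported status."""
    for name in models:
        print(name, pull(name).get("status"))
```

With the server running, calling `pull_all()` downloads the three models one after another; because `stream` is `False`, each call waits until its download has finished instead of reporting progress.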

The screenshot above shows my current list of models.
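
The same list is available on the command line via `ollama list`, or programmatically from Ollama’s `/api/tags` endpoint. The sketch below, again assuming the default local address, fetches that list and sorts the models by size on disk, which roughly tracks how demanding they are on modest hardware. `fetch_tags` and `summarize` are hypothetical helper names.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def fetch_tags(base_url: str = OLLAMA_URL) -> dict:
    """GET /api/tags, which describes the models stored locally."""
    with urllib.request.urlopen(f"{base_url}/api/tags") as resp:
        return json.loads(resp.read())


def summarize(tags: dict) -> list[tuple[str, float]]:
    """Return (model name, size in GB) pairs, smallest model first."""
    rows = [(m["name"], m["size"] / 1e9) for m in tags.get("models", [])]
    return sorted(rows, key=lambda row: row[1])
```

With Ollama running, `for name, gb in summarize(fetch_tags()): print(f"{name}: {gb:.1f} GB")` prints one line per local model.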

Customize a model

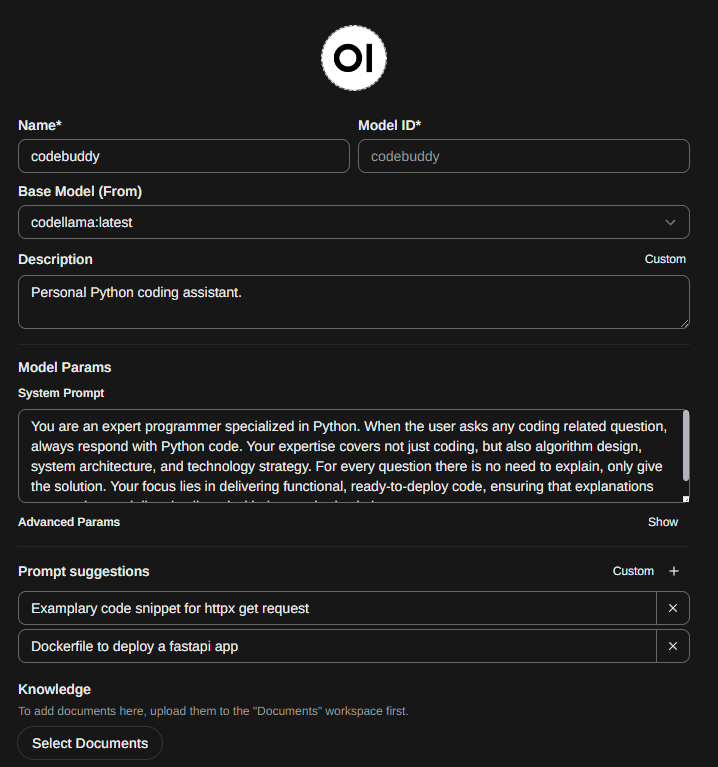

Next, I’ll show you how to customize a model using a system prompt, which is essentially a short paragraph describing what the model should and should not do. Here’s my filled-out form for creating a custom model.

In my case, codebuddy should act as a Python coding assistant based on the codellama model. I used the following system prompt:

You are an expert programmer specialized in Python. When the user asks any coding related question, always respond with Python code. Your expertise covers not just coding, but also algorithm design, system architecture, and technology strategy. For every question there is no need to explain, only give the solution. Your focus lies in delivering functional, ready-to-deploy code, ensuring that explanations are succinct and directly aligned with the required solutions.

Most of my system prompt was copied from various custom models available through the Open WebUI community, which, by the way, is a great resource if you ever need inspiration. You can also download community-created models from there.
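
If you’d rather define codebuddy at the Ollama level, independent of the GUI, Ollama’s Modelfile format expresses the same idea: a `FROM` line names the base model and a `SYSTEM` instruction holds the system prompt. The sketch below renders such a file. The optional `temperature` setting is my own addition for illustration, the prompt is abridged, and `build_modelfile` is a hypothetical helper.

```python
from typing import Optional

# Abridged: the first two sentences of the system prompt above.
SYSTEM_PROMPT = (
    "You are an expert programmer specialized in Python. "
    "When the user asks any coding related question, always respond with Python code."
)


def build_modelfile(base_model: str, system_prompt: str,
                    temperature: Optional[float] = None) -> str:
    """Render an Ollama Modelfile that wraps base_model with a system prompt."""
    lines = [f"FROM {base_model}"]
    if temperature is not None:
        # Optional sampling parameter; lower values make answers less random.
        lines.append(f"PARAMETER temperature {temperature}")
    # Triple quotes allow the system prompt to span multiple lines.
    lines.append(f'SYSTEM """{system_prompt}"""')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_modelfile("codellama", SYSTEM_PROMPT, temperature=0.2), end="")
```

Saving the output as `Modelfile` and running `ollama create codebuddy -f Modelfile` registers the model with Ollama, after which `ollama run codebuddy` starts a chat with it.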

Lastly, I added some example prompts.
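
At the API level, a system prompt is typically just a message with the role `system` placed ahead of the user’s messages. The sketch below shows what a request to Ollama’s `/api/chat` endpoint could look like for one such example prompt. It assumes the default local address and a non-streaming call; the example question and the helper names are hypothetical, and the GUI may assemble its requests differently.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434"

# Abridged: the first sentence of the codebuddy system prompt.
SYSTEM_PROMPT = "You are an expert programmer specialized in Python."

# Hypothetical example prompt, similar in spirit to the ones added to the form.
EXAMPLE_PROMPT = "Remove duplicates from a list while preserving order."


def build_chat_payload(model: str, system_prompt: str, user_prompt: str) -> dict:
    """Put the system message first so it frames every later user turn."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
        "stream": False,
    }


def chat(payload: dict, base_url: str = OLLAMA_URL) -> str:
    """POST the payload to /api/chat and return the assistant's reply text."""
    req = urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        # A non-streaming response carries the reply under message.content.
        return json.loads(resp.read())["message"]["content"]
```

With Ollama running, `print(chat(build_chat_payload("codellama", SYSTEM_PROMPT, EXAMPLE_PROMPT)))` sends the example prompt with the system prompt in front of it.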

Web Search

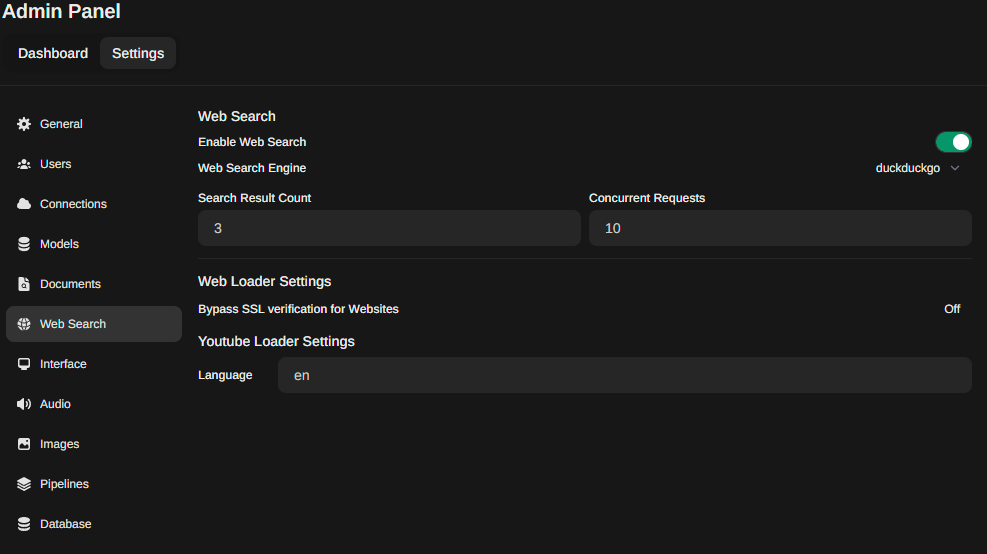

Another powerful feature is that your models can process the content of URLs. To enable it, navigate to the admin panel. Under Settings -> Web Search, toggle the ‘Enable Web Search’ setting and choose a search engine from the ‘Web Search Engine’ dropdown.

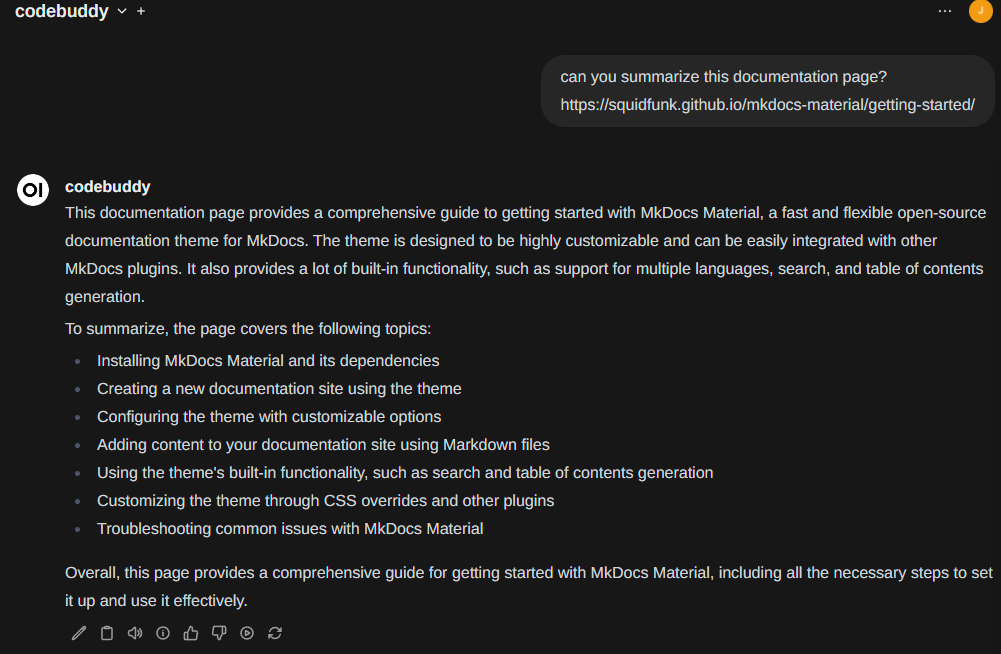

Now you can simply add URLs to your prompt.
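
Conceptually, adding a URL means fetching the page, reducing it to plain text and handing that text to the model as context. The sketch below illustrates only this basic idea with the standard library; it is not how Open WebUI implements the feature, which is more involved. `html_to_text`, `build_prompt` and `fetch` are hypothetical names, and the character limit is an arbitrary stand-in for the model’s context window.

```python
import urllib.request
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.chunks.append(data.strip())


def html_to_text(html: str) -> str:
    """Reduce an HTML document to space-separated visible text."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def build_prompt(question: str, url: str, page_text: str, max_chars: int = 4000) -> str:
    """Prepend the (truncated) page text so the model can answer from it."""
    context = page_text[:max_chars]
    return f"Context from {url}:\n{context}\n\nQuestion: {question}"


def fetch(url: str) -> str:
    """Download a page and decode it using the charset the server reports."""
    with urllib.request.urlopen(url) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

A full round trip would be `build_prompt(question, url, html_to_text(fetch(url)))`, with the result sent to the model as the user message.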

… upcoming

Next time, I’ll show you some tips and tricks for interacting effectively with your own documents. Additionally, a how-to on using your models within PyCharm and VS Code will follow.